I’ve had some thoughts about a change to the way Anki handles ease factors.

UPDATE: 2018-01-08

I’ve shared the experimental addon that does exactly what I describe in this article. See experimentalCardEaseFactor on GitHub.

In a nutshell

Instead of simply increasing the ease factor a little when the user marks a review as Easy and decreasing it when they mark the review as Again or Hard, set a target success rate of 85% for all reviews of each card and adjust the ease factor according to the formula below.

New Ease = Avg Historical Ease * log(0.85) / log(historical success rate)

Here, Ease is the ease factor of the card, Avg Historical Ease is the card’s average ease factor over its past reviews, 0.85 is the desired 85% success rate, and the historical success rate is the fraction of the card’s reviews that were answered Hard, Good, or Easy (that is, not Again).

To prevent this equation from becoming too aggressive:

- If the historical success rate is 100% or 0%, set it to 99% or 1% respectively, to avoid dividing by zero (log(1) = 0) or taking log(0).

- Cap the adjustment at +/- 20% in either direction.

- Keep the Interval Modifier setting at 100%.
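Here is a minimal Python sketch of the rule above. It assumes ease factors in Anki’s stored units (2500 = 250%) and reads the 20% cap as relative to the average historical ease. The function name `new_ease` is hypothetical; this is an illustration, not code from the experimentalCardEaseFactor addon.

```python
import math

TARGET = 0.85      # desired success rate
MAX_CHANGE = 0.20  # cap adjustments at +/- 20%

def new_ease(avg_historical_ease: float, successes: int, reviews: int) -> float:
    """Return the proposed ease factor, in the same units as the input.

    Hypothetical helper: `successes` counts reviews answered Hard, Good or Easy.
    """
    if reviews <= 0:
        raise ValueError("need at least one review")
    rate = successes / reviews
    # Keep the rate away from 0 and 1 so log() is defined and non-zero.
    rate = min(max(rate, 0.01), 0.99)
    multiplier = math.log(TARGET) / math.log(rate)
    # Limit the change to +/- 20% of the average historical ease.
    multiplier = min(max(multiplier, 1 - MAX_CHANGE), 1 + MAX_CHANGE)
    return avg_historical_ease * multiplier
```

For example, a card averaging 2500 that was remembered in all 10 of its reviews gets `new_ease(2500, 10, 10)`, which is 3000 (the +20% cap), while a card with exactly 17 successes out of 20 reviews stays at 2500.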

Intervals would still be calculated and cards scheduled according to the current procedures.

Some background

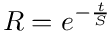

The above equation is derived from a model of Ebbinghaus’s forgetting curve. You can find the original equation in the Wikipedia article on the forgetting curve.

From this, you can derive that first formula. I actually did this myself once, so I know it’s valid. If I remember correctly, when you work this out, you end up with some natural logs, but because you have a log divided by another log, it actually doesn’t matter which base you use.

Basically, you need to solve for the ratio of t2 to t1 where strength (S) is a constant. This gives you a ratio of how much longer or shorter the interval should have been. Since Anki calculates intervals by simply multiplying the last interval by the ease factor, you can just adjust the ease factor by that ratio and presto!
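Written out, the derivation is short. This sketch assumes the simple exponential form R = e^{-t/S}, where R is the probability of recall, t is the time since the last review, and S is the memory’s strength; t_1 is the interval the card actually had, R_1 its observed success rate, and t_2 the interval that would have hit the target R_2 = 0.85.

```latex
\begin{aligned}
R &= e^{-t/S} \quad\Longrightarrow\quad t = -S \ln R \\[4pt]
\frac{t_2}{t_1} &= \frac{-S \ln R_2}{-S \ln R_1} = \frac{\ln R_2}{\ln R_1}
  = \frac{\ln 0.85}{\ln R_1} \qquad (S \text{ held constant}) \\[4pt]
\frac{\log_b R_2}{\log_b R_1} &= \frac{\ln R_2 / \ln b}{\ln R_1 / \ln b} = \frac{\ln R_2}{\ln R_1}
  \qquad (\text{the base } b \text{ cancels}) \\[4pt]
\text{New Ease} &= \text{Avg Historical Ease} \times \frac{\log 0.85}{\log R_1}
\end{aligned}
```

The last line follows because each new interval is roughly the previous interval times the ease factor, so scaling the ease by t_2/t_1 scales the next interval by the same ratio.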

The Anki Manual describes essentially this same formula in the Deck Options » Reviews section, but there it is used to calculate a deck-wide interval modifier. Here, I’m suggesting that we apply this modifier directly to the ease factor of a card on a card-by-card basis rather than a deck-wide adjustment. (Actually, the interval modifier applies to all cards within the deck options group; several decks can share the same options group.)

This equation seems to produce nice, reasonable adjustments when you’re close to the desired success rate. Unfortunately, if your current success rate is close to 0% or 100%, the adjustments become extreme: as the success rate approaches 100%, the multiplier grows without bound, and as it approaches 0%, the multiplier collapses toward zero. So we have to constrain them.

I think limiting the adjustments to no more than 20% up or down is a reasonable way to keep the adjustments from getting too extreme. As the success rate gets closer and closer to the desired 85%, the adjustments become smaller and smaller.
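To see how quickly the raw formula runs away at the extremes, and what the 20% cap does to it, here is a small Python table. The helper names are mine, for illustration only.

```python
import math

TARGET = 0.85

def raw_multiplier(rate: float) -> float:
    """Uncapped ease multiplier log(0.85) / log(rate), for 0 < rate < 1."""
    return math.log(TARGET) / math.log(rate)

def capped_multiplier(rate: float, cap: float = 0.20) -> float:
    """The same multiplier, clamped to [1 - cap, 1 + cap]."""
    return min(max(raw_multiplier(rate), 1 - cap), 1 + cap)

for rate in (0.01, 0.50, 0.70, 0.80, 0.85, 0.90, 0.95, 0.99):
    print(f"{rate:4.0%}  raw x{raw_multiplier(rate):7.3f}  capped x{capped_multiplier(rate):5.3f}")
```

Working the numbers through, the raw multiplier is roughly 16 at a 99% success rate and roughly 0.23 at 50%. The cap also binds more often than you might expect: it only stops applying between roughly 82% and 87% success, and inside that band the adjustment shrinks smoothly toward zero as the rate approaches 85%.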

From decks to cards

I used to use this formula to adjust the interval modifier of a deck. I’d figure out what the current success rate was deck-wide, and come up with an interval modifier to adjust intervals to hit that 85% target.

The interval modifier of a deck options setting is a percentage. All intervals are multiplied by the interval modifier. It’s a way to basically increase or decrease all the intervals with one setting.

But at some point, I realized that instead of looking at the success rate of a deck, I could look at the past reviews for a single card and use that to calculate the card’s success rate. Then I could adjust the card’s individual ease factor to try to hit my target of 85%.

If we’re adjusting each individual card like this, then we should set that interval modifier to 100% (no change). That way, the ease factors for each card won’t be affected by the other cards in the deck.

Looking at a single card instead of a deck-full of cards means that we can use this equation to adjust a card’s ease factor individually. The outliers don’t get caught up in an interval modifier based on a deck-wide average.
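The difference between a deck-wide modifier and per-card adjustments is easy to see with a toy example. This Python sketch assumes review history given as (card id, button) pairs, using Anki’s revlog button numbering for review cards (1 = Again, 2 = Hard, 3 = Good, 4 = Easy); the card ids, histories, and helper names are made up for illustration.

```python
import math
from collections import defaultdict

TARGET = 0.85

def success_rates(reviews):
    """Map card id -> share of its reviews not answered Again.

    `reviews` holds (card_id, button) pairs; button uses Anki's revlog
    numbering for review cards: 1=Again, 2=Hard, 3=Good, 4=Easy.
    """
    passed = defaultdict(int)
    total = defaultdict(int)
    for card_id, button in reviews:
        total[card_id] += 1
        if button > 1:  # Hard, Good and Easy all count as a success
            passed[card_id] += 1
    return {cid: passed[cid] / total[cid] for cid in total}

def multiplier(rate, cap=0.20):
    """Capped log(0.85) / log(rate) ease multiplier."""
    rate = min(max(rate, 0.01), 0.99)
    m = math.log(TARGET) / math.log(rate)
    return min(max(m, 1 - cap), 1 + cap)

# Hypothetical deck: card A is a troublemaker, B and C are never forgotten.
history = [("A", b) for b in (1, 3) * 5]
history += [("B", 3)] * 10 + [("C", 3)] * 10

rates = success_rates(history)
deck_rate = sum(b > 1 for _, b in history) / len(history)
print(f"deck-wide: {deck_rate:.0%} -> x{multiplier(deck_rate):.3f} for every card")
for cid, rate in sorted(rates.items()):
    print(f"card {cid}: {rate:.0%} -> x{multiplier(rate):.3f}")
```

Here the deck as a whole sits at about 83% success, so a deck-wide modifier would shorten every card’s intervals, including B and C, which have never been forgotten. Per card, A’s ease comes down by the full 20% while B and C go up by 20%.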

Differences from the current method

The current method of adjusting ease factors is simply to add 150 to the ease factor when you answer a review with Easy, subtract 150 when you answer Hard, and make no change for reviews answered Good. If you forget a card, Anki subtracts 200 from the ease factor.

There’s no adjustment towards a goal. As long as you keep hitting Good, we just stick with the ease factor the card already has.

The result is that a card’s ease factor adjusts to whatever range of ease (or laziness) causes you to mark the card as Good. Unless you’re a very disciplined and consistent person, this range is likely to be pretty wide, and the boundaries are likely to move drastically with your mood.

Anki effectively tries to keep each card in the subjective Good range forever. Forgetting means something went wrong, so we lower the ease factor, and we only increase the ease factor if the card is so easy that you can be bothered to mark it as Easy.

The formula above would work in a similar way, but focused on your overall success rate, rather than your most recent performance.

Forgetting would result in a decreased ease factor, and remembering would result in an increased ease factor. However, if you keep hitting Good, the ease factor will keep increasing until you forget. Forgetting about 15% of the time would be an expected outcome rather than something we try to avoid.

By using the formula above, we would also make much faster adjustments towards our goal of 85% success. Even with a cap of a 20% change up or down, that’s often going to be larger than Anki’s 150 or 200 points. When we’re close to our target, the adjustments will be much finer, while Anki’s are stuck at +/- 150 or -200.
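A quick Python comparison of step sizes at an ease of 250% (stored as 2500). For simplicity it assumes the card’s current ease equals its average historical ease; the function names are hypothetical. The fixed steps are the ones described above, plus Anki’s 130% minimum ease.

```python
import math

def anki_step(ease: int, button: int) -> int:
    """Anki's default ease change after a review (2500 = 250%)."""
    delta = {1: -200, 2: -150, 3: 0, 4: +150}[button]
    return max(1300, ease + delta)  # ease never drops below 130%

def proportional_step(avg_ease: float, rate: float) -> float:
    """Capped log-ratio rule from this post, targeting 85% success."""
    rate = min(max(rate, 0.01), 0.99)
    multiplier = math.log(0.85) / math.log(rate)
    return avg_ease * min(max(multiplier, 0.8), 1.2)

ease = 2500
for name, button in (("Again", 1), ("Hard", 2), ("Good", 3), ("Easy", 4)):
    print(f"Anki {name:5}: {anki_step(ease, button) - ease:+d}")
for rate in (0.50, 0.80, 0.855, 0.90, 1.00):
    print(f"rate {rate:.1%}: {proportional_step(ease, rate) - ease:+.0f}")
```

At 2500, the capped rule can move the ease by up to 500 points in one step, versus Anki’s 150 or 200, while at 85.5% success it moves by only about 94 points, and at exactly 85% by nothing at all.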

How bad is forgetting?

Anki noobs often think that Anki’s algorithm tries to schedule a card for just before you forget it. And that’s to be expected given that the Anki website says essentially the same thing.

Remember Efficiently: Only practice the material that you’re about to forget. (apps.ankiweb.net)

As I hope I’ve shown you, that’s bullshit. Anki tries to schedule a review for a time when you’ll still be willing to mark the card as Good. That’s it. It’s a very imprecise and subjective target.

I think there are two assumptions lurking in the background here.

- It’s better to review right before you forget rather than right after.

- Remembering is binary; you either remember or forget and there is no in between.

I’m skeptical about assumption #1. Maybe it’s true, and maybe it’s not. There’s definitely a benefit to reviewing something that you’ve just forgotten. And I don’t think the research has found that one is actually much better than the other. What is probably true is that it’s psychologically more rewarding if you’re remembering most of your reviews… exactly why I aim for an 85% success rate.

And I happen to think #2 is complete bullshit. I can’t say this with any authority, but it seems to me that every time you try to remember something, it’s a game of probability. If you know it well, your probability of remembering it is high; if you don’t know it well, the probability is low. At least that’s how it seems to me based on my personal experience.

I don’t think we really know how the mind retrieves a memory, but it seems to me that sometimes you get lucky and you don’t know how you possibly remembered something and other times you can’t recall something even though it’s on the tip of your tongue.

There’s a theory from Dr. Bjork of UCLA called the New Theory of Disuse that might explain some of these memory oddities. According to his model, every memory has two strengths: a storage strength and a retrieval strength.

If your storage strength is low, but your retrieval strength is high for something, you’ll be able to remember it, but you’ll soon forget it because retrieval strength fades quickly. Retrieval strength is what Ebbinghaus was mostly charting with his forgetting curve.

But if your storage strength is high and your retrieval strength is low, the memory will be hard to recall even though you know that you know it. That’s when things feel like they’re on the tip of your tongue.

The way I interpret it, if retrieval strength is at 85%, you have an 85% chance of remembering something and a 15% chance of failing. But storage strength influences how quickly retrieval strength fades; as things become more and more familiar, your ability to retrieve them fades more and more slowly.

Down to two options

With this equation, it won’t matter whether you choose Easy, Good, or Hard. The success rate over time will tell us whether the ease factor is too easy (over 85% success), or too hard (under 85% success). We don’t have to think about it anymore. We can just select between Again and Good; a simple binary choice between getting a card right and getting it wrong.

This frees up all the mental energy previously devoted to choosing among the three buttons. You can now use it for what you should be using it for: remembering the cards and getting through review sessions.

The downside

One downside I can see is that for young cards, we’ll have a very small sample size. One good review out of a total of one review is a 100% success rate, and we’d increase the ease factor by 20% because of it.

This means that we could see a lot of whip-sawing up and down in the ease factor of young cards before they settle into a good range. The good news is that this won’t affect the short intervals of young review cards nearly as much as it would affect the intervals of more mature cards.
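A short Python simulation makes the whip-sawing concrete. It assumes a starting ease of 2500, treats the average historical ease as the mean of every ease the card has had so far (including the starting one), and recalculates after each review; those choices and the review sequence are illustrative assumptions, not details taken from the addon.

```python
import math
from statistics import mean

def capped_multiplier(rate: float) -> float:
    rate = min(max(rate, 0.01), 0.99)    # avoid log(0) and log(1) = 0
    m = math.log(0.85) / math.log(rate)
    return min(max(m, 0.8), 1.2)         # +/- 20% cap

def simulate(outcomes, start_ease=2500.0):
    """Return the card's ease after each review; True means remembered."""
    eases = [start_ease]
    passed = 0
    for n, ok in enumerate(outcomes, start=1):
        passed += ok
        eases.append(mean(eases) * capped_multiplier(passed / n))
    return eases

history = simulate([True, True, False, True, True])
print([round(e) for e in history])
```

Under these assumptions the ease climbs from 2500 to 3300 after two successes, drops to about 2347 after one lapse, and keeps falling through the next two successes, because the card’s overall success rate is still below 85%.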

On the other hand, Anki’s current system means that we often spend a very long time slowly creeping towards an appropriate ease factor.

One other potential problem is that if you’ve been using an interval modifier, then the ease factor in the reviews database isn’t really the ease factor that was used. Instead, the effective ease factor was the ease factor listed in the database times the interval modifier in effect at that time (which is not listed).

However, the effective ease factor (and from it, the interval modifier) can be deduced, approximately, by dividing the interval by the previous interval, both of which are listed in the reviews database. You then have to multiply by 1000, because of the way Anki stores these values (a 250% factor is stored as 2500).
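For anyone who wants to try this on their own collection, here is a Python sketch using the standard sqlite3 module. It builds a tiny in-memory stand-in for Anki’s revlog table with only the columns used here (the real table has more); in practice you would point `sqlite3.connect` at a copy of your collection.anki2 file instead. The sample rows are invented. Negative `ivl` values are learning or relearning steps measured in seconds, so they are skipped.

```python
import sqlite3

# In-memory stand-in for Anki's revlog table (only the columns used here).
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE revlog (id INTEGER PRIMARY KEY, cid INTEGER, ease INTEGER,"
    " ivl INTEGER, lastIvl INTEGER, factor INTEGER)"
)
db.executemany(
    "INSERT INTO revlog (id, cid, ease, ivl, lastIvl, factor) VALUES (?, ?, ?, ?, ?, ?)",
    [
        (1, 42, 3, 10, 4, 2500),     # 10 / 4 -> 2500: no modifier in effect
        (2, 42, 3, 20, 10, 2500),    # 20 / 10 -> 2000: e.g. an 80% interval modifier
        (3, 42, 1, -600, 20, 2300),  # negative ivl = seconds (relearning): skipped
    ],
)

# Effective ease = interval / previous interval, in Anki's x1000 units.
rows = db.execute(
    """
    SELECT id, factor, ROUND(CAST(ivl AS REAL) / lastIvl * 1000) AS effective
    FROM revlog
    WHERE ivl > 0 AND lastIvl > 0
    ORDER BY id
    """
).fetchall()
for rev_id, stored, effective in rows:
    print(rev_id, stored, effective)
```

Dividing the effective factor by the stored factor then gives an estimate of the interval modifier in effect (0.8 for the second row). Treat real results as approximate: Anki adds a little random fuzz to intervals, so actual ratios won’t land exactly on round values.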

A great resource for understanding Anki’s database structure is the AnkiDroid Database Schema page on GitHub. Also compare it with the section on Manual Analysis in the Anki manual.

What about all that forgetting?

Since the system is geared towards a 15% failure rate, you might be worried about lapses, and with good reason.

Anki’s default way of dealing with lapses is that after forgetting a card and relearning it, the card starts off with an interval of one day and has to work its way back up all over again.

This is a shitty way to handle it and I’ve gone into detail about that in another post. To sum it up, just because you couldn’t remember something doesn’t mean you need to start all over again as if you never knew it in the first place.

So with this system, we’d have to set the new interval of lapsed cards to a percentage that would, on average, give us an 85% success rate on subsequent reviews.

This can be automated through an addon, but that’s a story for another time and another post…